Design Overview

I admit I didn't 'design' the system; I let it evolve from ideas and previous designs. This description therefore documents what evolved. I might even find things I don't like, or inconsistencies where my thinking changed part way through. Either way, it's going to be interesting to actually explain what I did and then try to justify it, or suggest to myself that I change it!

the building blocks

The system is a component system. I love components. Get them at the right granularity and they become reusable building blocks for a whole class of system, in this case SDRs.

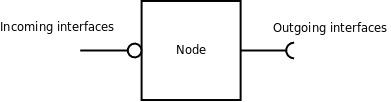

The fundamental building block is a Node.

This is where the name ACORN comes from (a Collection (or Collaboration) of Radio Nodes). A node is a process, that is, a separately executable piece of code, an .exe if you like. That is not to say a node is useful on its own; it can be run, but by itself it is unlikely to do anything useful. Several nodes are needed to make a radio, but more on that later.

A node has incoming and outgoing interfaces.

- Incoming interfaces are provided by the node; it is a provider of those interfaces, which are available for others to use. You could say that the node provides services that other nodes can connect to.

- Outgoing interfaces are interfaces that the node uses in order to complete the tasks requested of it.

It is reasonable to think of the system as a network of nodes connected to each other. The incoming interface at the head of the net could be a user or some automated process and the outgoing interface at the end of the net is probably going to be to some hardware.

Nodes communicate with each other over TCP/UDP, so machine boundaries can be crossed.
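To make incoming and outgoing interfaces concrete, here is a minimal Python sketch. The `Node` class, the `connections` helper and all node and interface names are hypothetical illustrations, not ACORN code; they model only the logical net of who uses whose services, not the TCP/UDP transport.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical model of a node: a separately executable process that
    provides (incoming) interfaces and uses (outgoing) interfaces."""
    name: str
    provides: set[str] = field(default_factory=set)  # incoming: services offered
    uses: set[str] = field(default_factory=set)      # outgoing: services needed


def connections(nodes: list[Node]) -> list[tuple[str, str, str]]:
    """List (user, interface, provider) links in the logical net: one link
    wherever a node uses an interface that another node provides."""
    links = []
    for user in nodes:
        for iface in sorted(user.uses):
            for provider in nodes:
                if provider is not user and iface in provider.provides:
                    links.append((user.name, iface, provider.name))
    return links


# A tiny net: the UI at the head, hardware at the end.
ui = Node("ui", uses={"DSP"})
dsp = Node("dsp", provides={"DSP", "LifeCycle"}, uses={"HW"})
hw = Node("hw", provides={"HW", "LifeCycle"})
print(connections([ui, dsp, hw]))
# [('ui', 'DSP', 'dsp'), ('dsp', 'HW', 'hw')]
```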

The first node

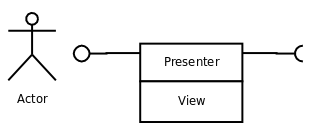

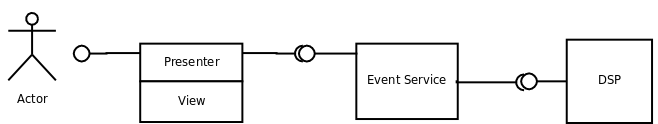

Let's consider a real node, or rather part of one: the User Interface Presenter.

The User Interface has to be considered in two completely separate parts. I've borrowed some terminology from a user interface pattern generally known as MVC (Model, View, Controller), or its slightly modified form MVP (Model, View, Presenter). The presenter part represents the interactions of a user with the controls of the interface: pressing buttons, scrolling digits, etc. Each and every interaction generates messages on one or more outgoing interfaces. At the moment the generator of messages must know which interface to hit with the message. This is a simplification of what I have done in previous systems, where an intermediary knew who to forward particular messages to. I've not found this more direct approach to be problematic so far. The presenter side of the user interface is therefore pretty simple: in essence it turns the user interaction into a message to an interface. The presenter does not know or care who, if anyone, receives the message, and it never talks to the view directly. Let's follow a typical interaction through the next few sections: say the user clicks a mode button to change mode from LSB to USB.
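A minimal sketch of the presenter's job, assuming a `publish(interface, message, payload)` callable that stands in for sending to the event service. The class, method, interface and message names are hypothetical; the point is that each user interaction maps to a message on an outgoing interface, and the presenter neither knows who receives it nor touches the view.

```python
from typing import Callable

# publish(interface, message, payload): in ACORN this would go to the
# event service; here it is any callable (an assumption for the sketch).
Publish = Callable[[str, str, dict], None]


class Presenter:
    """Hypothetical UI presenter: turns user interactions into messages on
    outgoing interfaces. It never talks to the view directly."""

    def __init__(self, publish: Publish) -> None:
        self._publish = publish

    def on_mode_button(self, mode: str) -> None:
        # The presenter knows which interface handles mode changes,
        # but not who (if anyone) is listening on it.
        self._publish("DSP", "change_mode", {"mode": mode})

    def on_digit_scroll(self, digit_hz: int, clicks: int) -> None:
        # Scrolling a digit is a relative change, not an absolute frequency.
        self._publish("HW", "increment_frequency", {"delta_hz": digit_hz * clicks})


sent = []
presenter = Presenter(lambda iface, msg, payload: sent.append((iface, msg, payload)))
presenter.on_mode_button("USB")    # user switches LSB -> USB
presenter.on_digit_scroll(100, 1)  # user scrolls the 100Hz digit up by one
print(sent)
# [('DSP', 'change_mode', {'mode': 'USB'}), ('HW', 'increment_frequency', {'delta_hz': 100})]
```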

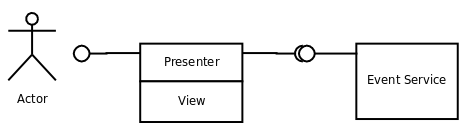

The intermediary

I said there was no intermediary. I lied slightly: there is one, but it doesn't understand the messages being sent or know who to forward them to. This intermediary is a publish/subscribe service. It's just another node with incoming and outgoing interfaces, but it plays a special role in the system.

This publish/subscribe service is usually known as an event service. It is a VERY important part of the system: every control signal in the system goes through it. Even though the presenter knows which interface the message must be sent to, it has no idea whether anyone is listening on that interface. The event service decouples senders and receivers so that they don't need to know about each other. All a sender needs to know about, physically, is the event service, because that is where it sends its messages.

Publishing and Subscribing

- Publishing is what the sender does, in this case the presenter. In order to publish messages to an interface, it must inform the event service that it is a publisher on that particular interface. This happens during initialisation of the user interface node. It stands to reason, then, that all radio nodes depend on the event service node, so in the startup order (remember the event service is just an executable) it must be started first. As far as the presenter is concerned, that's it: the message to change mode is sent to the event service, job done.

- Subscribing is what the receiver does, as follows...

introducing the Signal Processing node

Let's introduce the receiver of this particular change mode message, the DSP.

The DSP is a subscriber to this message. It must provide an interface which includes the 'change mode' message. Just as with the publisher, it must tell the event service that it subscribes to the interface the presenter is publishing the change mode message to. When the system starts up, most nodes will be publishers AND subscribers, and some will be just one or the other. That is how the system connects itself up and behaves like a radio from nothing more than a collection of nodes instantiated as the system starts.
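The publish and subscribe steps can be sketched with a small in-process event service. This is an illustration under stated assumptions: the real ACORN event service is a separate executable reached over TCP/UDP, and the method names `register_publisher`, `subscribe` and `publish` are hypothetical.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[str, dict], None]  # handler(message, payload)


class EventService:
    """Hypothetical in-process stand-in for the event service. Senders only
    know the event service; receivers only know the interfaces they
    subscribe to. Neither knows about the other."""

    def __init__(self) -> None:
        self._publishers: dict[str, set[str]] = defaultdict(set)
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def register_publisher(self, node: str, interface: str) -> None:
        # Done once, during the publishing node's initialisation.
        self._publishers[interface].add(node)

    def subscribe(self, interface: str, handler: Handler) -> None:
        # Done by each receiver for the interfaces it provides.
        self._subscribers[interface].append(handler)

    def publish(self, node: str, interface: str, message: str, payload: dict) -> int:
        if node not in self._publishers[interface]:
            raise PermissionError(f"{node} is not a publisher on {interface}")
        handlers = list(self._subscribers[interface])
        for handler in handlers:
            handler(message, payload)
        return len(handlers)  # zero is fine: the sender does not care


events = EventService()
events.register_publisher("ui", "DSP")                               # presenter side
print(events.publish("ui", "DSP", "change_mode", {"mode": "USB"}))  # 0, nobody listening
events.subscribe("DSP", lambda msg, p: print("DSP got", msg, p))    # DSP side
events.publish("ui", "DSP", "change_mode", {"mode": "USB"})
# DSP got change_mode {'mode': 'USB'}
```

Rejecting unregistered publishers is a choice made for this sketch; it simply makes the registration step visible.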

I hope this is beginning to paint a picture because there are a number of useful consequences of connecting the system up in this way.

interfaces

Before discussing the 'useful consequences', it is necessary to understand what an interface is. It might be pretty obvious, but I don't want to assume any knowledge.

An interface is something that exists between two entities. It might be between a user and an application, in which case it's a visual interface, constrained by what the user can do to the application. It might be between software and a piece of hardware, in which case it's constrained by what the hardware can do. These are interfaces that everyone is familiar with. The interfaces within a system are usually much more difficult to discern. If it's a single monolithic application, it may be almost impossible to sort out where the real boundaries lie between the different functions of the system. In many cases the boundaries are pretty grey, with function not always on the correct side of the boundary.

A component system must have clearly defined boundaries and properly defined interfaces for it to work at all. The interfaces stand out and effectively define the system. The interfaces between ACORN components are defined in a language-independent way. It is important to realise that the interface is completely separate from the implementation: the node must provide concrete implementations for the interface(s) it implements. The DSP node, for example, implements two interfaces. The first is a life-cycle interface which every node must implement; this provides a way to start, stop and terminate the node. The second covers the functions of the DSP, of which 'change mode' is one. Just by looking at the interface definitions, a good understanding of how the system works can be gleaned; there is no need to look at code to discover how the system is partitioned and what each part can do.
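ACORN defines its interfaces in a language-independent way, so the following is only a Python analogy. It uses the standard `abc` module to show an interface kept separate from its implementation. `LifeCycle`, `DspInterface` and `DspNode` are hypothetical names; the start/stop/terminate and 'change mode' operations come from the text above.

```python
from abc import ABC, abstractmethod


class LifeCycle(ABC):
    """Every node implements this: start, stop and terminate."""

    @abstractmethod
    def start(self) -> None: ...

    @abstractmethod
    def stop(self) -> None: ...

    @abstractmethod
    def terminate(self) -> None: ...


class DspInterface(ABC):
    """Hypothetical DSP functions; 'change mode' is one of them."""

    @abstractmethod
    def change_mode(self, mode: str) -> None: ...


class DspNode(LifeCycle, DspInterface):
    """One concrete implementation behind both interfaces. Another node
    could implement the same interfaces with a different DSP."""

    def __init__(self) -> None:
        self.state = "created"
        self.mode = "LSB"

    def start(self) -> None:
        self.state = "running"

    def stop(self) -> None:
        self.state = "stopped"

    def terminate(self) -> None:
        self.state = "terminated"

    def change_mode(self, mode: str) -> None:
        self.mode = mode


node = DspNode()
node.start()
node.change_mode("USB")
print(node.state, node.mode)  # running USB
```

Instantiating `LifeCycle` directly, or a subclass that forgets one of its methods, raises `TypeError`, which is a rough Python equivalent of "the node must provide concrete implementations".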

useful consequences

There are a number of ways in which a loosely coupled system helps to keep the system sane (and the people coding it) throughout its life.

- Dependencies in software systems can be a real pain, especially if they are circular, that is, A wants to connect to B and B wants to connect to A. When instantiating such a system it can be quite tricky to get it to start cleanly, and the more nodes and connections there are, the harder it gets. By decoupling everything through an event service, only the event service needs to be started first, and the order of everything else is irrelevant because there are no direct connections except to the event service. You might find later that I lied slightly again, but in the main it's true.

- So far we have only looked at one simple connection, but the real power lies in having multiple publishers and subscribers for the same interfaces. How this makes for a flexible system will become more obvious in subsequent sections.

- As each node is a separate executable, nodes can be instantiated and terminated live on the system. A newly started node can receive and send messages as soon as it registers itself with the event service.

- The implementation behind an interface can be changed at will and different profiles (see later) will start different nodes behind the same interfaces.
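The second and third points (several subscribers on one interface, and nodes joining or leaving a live system) can be illustrated with a minimal in-process bus. `Bus` and the interface name `update` are hypothetical stand-ins for the event service and its interfaces.

```python
from collections import defaultdict


class Bus:
    """Minimal in-process stand-in for the event service (hypothetical)."""

    def __init__(self) -> None:
        self._subs = defaultdict(list)

    def subscribe(self, interface, handler):
        self._subs[interface].append(handler)

    def unsubscribe(self, interface, handler):
        self._subs[interface].remove(handler)

    def publish(self, interface, message):
        for handler in list(self._subs[interface]):
            handler(message)


bus = Bus()
seen = {"ui1": [], "ui2": [], "ui3": []}


def ui_handler(name):
    return lambda msg: seen[name].append(msg)


h1, h2 = ui_handler("ui1"), ui_handler("ui2")
bus.subscribe("update", h1)       # two subscribers on one interface
bus.subscribe("update", h2)
bus.publish("update", {"mode": "USB"})

h3 = ui_handler("ui3")
bus.subscribe("update", h3)       # a node started on the live system
bus.unsubscribe("update", h1)     # a node terminated on the live system
bus.publish("update", {"mode": "CW"})

print(seen)
# {'ui1': [{'mode': 'USB'}], 'ui2': [{'mode': 'USB'}, {'mode': 'CW'}], 'ui3': [{'mode': 'CW'}]}
```

Nothing here depends on the order in which the UIs appear; each one only needs the bus to exist first.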

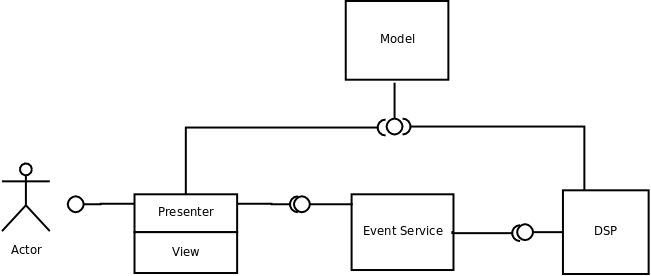

The model

The model is an important part of the overall system; nothing will work without a model.

I did say there was a little bit of lying going on about everything going through the event service. The model does use the event service, but only for live updates in the system; more on that later. For now, the model is a node like any other, with an interface that supports a number of functions to do with data storage and retrieval.

All systems have a model of some sort; it's often some database tables that everyone dips into, sometimes with an access layer built on top. It can be XML, which is often treated in much the same way as a database. The problem with doing things that way is that there may be no abstraction between the application and the data. Sure, you can build in an abstraction layer, and many applications do. But there is still a problem: what if you want to run the DSP and the GUI on different machines? They both need access to the model, so you might have a database server on the network, or replicate the database across machines (in real time?). It starts to get messy and complicated.

The ACORN model is just a node. It understands what profiles, capabilities and dynamic state data are (see the descriptions below) and provides a service to all who require it, across machine boundaries if required. No one cares how the data is held, persisted or restored.

The model is the second node to be started, after the event service. However, the bootstrap process that starts everything up will first try to connect to a model in case one is already running on another machine (two models would cause a lot of confusion!). All nodes, when they start, connect to the model and pull off any data they require.
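The bootstrap rule (reuse a running model if one can be found, otherwise start a local one, and never run two) might look like the sketch below. It assumes the model listens on a TCP port; the candidate addresses and the `start_local` callback are hypothetical, and `socket.create_connection` is the standard-library call used to probe for a listener.

```python
import socket
from typing import Callable, Iterable, Tuple

Address = Tuple[str, int]


def model_running(addr: Address, timeout: float = 0.5) -> bool:
    """True if something accepts TCP connections at addr (assumed to be a model)."""
    try:
        with socket.create_connection(addr, timeout=timeout):
            return True
    except OSError:  # refused, timed out or unresolvable
        return False


def bootstrap_model(candidates: Iterable[Address],
                    start_local: Callable[[], Address]) -> Address:
    """Reuse a model that is already running elsewhere; only if none answers,
    start a local one. Never start a second model."""
    for addr in candidates:
        if model_running(addr):
            return addr
    return start_local()


# Usage with hypothetical addresses: try another machine first, then fall back.
# addr = bootstrap_model([("shack-pc", 10001)], start_local=lambda: ("localhost", 10001))
```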

To make the picture more complete, as we are on the subject, here is a little bit about the model.

Philosophy

Go back to the first few sections, where I talked about the MVC and MVP triads in user interface design. In conventional MVP the model is the thing that is changed by the presenter. The change causes the model to send it on to all views registered to be notified of that particular change, so the UI updates as a result of something in the model changing. ACORN has a different pattern, which will be explained when we look at how the views are updated. The model, however, is still changed by the presenter (usually), and at times by the view. The model is always up to date with the current state of the system.

Other nodes connect to the model and pull the data they require from it, with one exception: if the change is to static data, i.e. configuration or options data, and that data is marked as immediately updated (in the live system), then the model sends a notification through the event service and there is, guess what, an interface where nodes receive those notifications.
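A sketch of that one exception, assuming the model marks individual options as live. `OptionsStore`, the `model_update` interface name, the option names and the `publish` callable are all hypothetical: normal reads are pulled from the model, while changes to live options are pushed through the event service.

```python
from typing import Any, Callable


class OptionsStore:
    """Hypothetical slice of the model holding static (options) data.
    Options flagged as live are announced through the event service;
    everything else is simply pulled by nodes when they need it."""

    def __init__(self, publish: Callable[[str, dict], None]) -> None:
        self._publish = publish          # e.g. sends to the event service
        self._values: dict[str, Any] = {}
        self._live: set[str] = set()

    def define(self, name: str, value: Any, live: bool = False) -> None:
        self._values[name] = value
        if live:
            self._live.add(name)

    def get(self, name: str) -> Any:
        return self._values[name]

    def set(self, name: str, value: Any) -> None:
        self._values[name] = value
        if name in self._live:
            self._publish("model_update", {name: value})


notices = []
opts = OptionsStore(lambda iface, payload: notices.append((iface, payload)))
opts.define("agc_speed", "slow", live=True)   # hypothetical live option
opts.define("audio_device", "default")        # hypothetical non-live option
opts.set("agc_speed", "fast")
opts.set("audio_device", "usb")
print(notices)  # [('model_update', {'agc_speed': 'fast'})]
```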

interface

The model is slightly different in the interface it supports. There are potentially tens or hundreds of attributes in the different parts of the model. In general the interfaces are type safe, meaning that if you call an interface method which is not there, or call it with the wrong parameters, the system complains. With the model it would be an unacceptable overhead to maintain a very large and constantly changing interface (during development one changes the model often). The model interface is therefore kept to name/value pairs, where the value is typed and can be boolean, integer, float, string or list. The model knows which profile is currently selected and therefore which capability applies.
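A minimal sketch of a name/value interface with typed values. The class name and the strict rule that an attribute may not change type once set are assumptions made for illustration. Python's `bool` is a subclass of `int`, so the check compares exact types rather than using `isinstance`.

```python
from typing import Any

# Value types permitted by the model interface, per the text.
ALLOWED_TYPES = (bool, int, float, str, list)


class NameValueModel:
    """Hypothetical generic model interface: one get/set pair replaces a
    method per attribute, but values are still type checked."""

    def __init__(self) -> None:
        self._data: dict[str, Any] = {}

    def set(self, name: str, value: Any) -> None:
        kind = type(value)  # exact type: bool must not pass as int
        if kind not in ALLOWED_TYPES:
            raise TypeError(f"{name}: unsupported type {kind.__name__}")
        old = self._data.get(name)
        if old is not None and type(old) is not kind:
            raise TypeError(f"{name}: expected {type(old).__name__}, got {kind.__name__}")
        self._data[name] = value

    def get(self, name: str) -> Any:
        return self._data[name]


model = NameValueModel()
model.set("modea", "USB")
model.set("modea", "LSB")   # same type: accepted
try:
    model.set("modea", 3)   # wrong type: rejected
except TypeError as err:
    print(err)              # modea: expected str, got int
```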

performance

The model is updated constantly, and any node that needs data not available through the event service, or that is just starting up, pulls that data from the model. Performance therefore needs to be good. The model is implemented as a number of dictionaries which are restored from disc, held in memory during the session and written back to disc on termination, so read/write performance is pretty good.

persistence

Model data has to be persistent to be useful. A simple scheme is used: the model is a number of Python dictionary objects that are serialised to and from disc. It's simple, elegant and fast. The model can be updated in the Python source very quickly and easily, and much of it is also available through the GUI in the options panel. There is a simple version mechanism, but more work is needed in that area.
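The text does not say which serialiser is used. This sketch assumes the standard-library `pickle` module and a hypothetical version tag, just to show the restore-on-start, save-on-terminate cycle.

```python
import pickle
import tempfile
from pathlib import Path

MODEL_VERSION = 1  # hypothetical format version


def save_model(path: Path, parts: dict) -> None:
    """Write the in-memory dictionaries back to disc, e.g. on termination."""
    with path.open("wb") as f:
        pickle.dump({"version": MODEL_VERSION, "parts": parts}, f)


def load_model(path: Path) -> dict:
    """Restore the dictionaries at startup; empty if nothing was saved yet."""
    if not path.exists():
        return {}
    with path.open("rb") as f:
        saved = pickle.load(f)
    if saved.get("version") != MODEL_VERSION:
        raise ValueError(f"model file version {saved.get('version')}, expected {MODEL_VERSION}")
    return saved["parts"]


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "model.pkl"
    save_model(path, {"profile": {"name": "hpsdr"}, "dynamic": {"modea": "USB"}})
    print(load_model(path)["dynamic"])  # {'modea': 'USB'}
```

Unpickling can execute code embedded in a crafted file, so this approach only suits files the model itself wrote.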

The parts of a model

There are three distinct parts to the model; profile, capability and dynamic.

- Profile is the static data or options associated with a particular set of nodes that make up a particular radio. There are about 10 different profiles in the system at the moment, for SDR1000, Softrock and HPSDR with different DSPs, etc. Profiles can have different attributes. The UI options panels are designed to be a superset: if an attribute is not available in the profile, the associated field is greyed out.

- Capability is what the radio can do as a result of the profile that was started. For example a simple DSP might have no oscillator or a limited set of modes and filters. A Softrock may have no VFO. Each profile links to a capability and that capability configures the UI to only enable controls that are valid for the profile. It may also change the way things work.

- Dynamic is the radio state. Each profile has separate dynamic state as it would be fraught to have the same state for all radios.
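The three parts and their relationships can be sketched as plain dictionaries. Everything here (the `Model` class, the profile and capability names, the `controls` key) is hypothetical. It shows each profile pointing at a capability that decides which UI controls are enabled, and each profile keeping its own dynamic state.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Model:
    """Hypothetical layout of the three model parts."""
    profiles: dict[str, dict[str, Any]]        # static options per profile
    capabilities: dict[str, dict[str, Any]]    # what each radio can do
    dynamic: dict[str, dict[str, Any]] = field(default_factory=dict)  # state per profile
    current: str = ""

    def select(self, profile: str) -> None:
        self.current = profile
        self.dynamic.setdefault(profile, {})   # each profile keeps separate state

    @property
    def capability(self) -> dict[str, Any]:
        return self.capabilities[self.profiles[self.current]["capability"]]

    @property
    def state(self) -> dict[str, Any]:
        return self.dynamic[self.current]

    def control_enabled(self, control: str) -> bool:
        # The UI only enables controls that the capability supports.
        return control in self.capability["controls"]


model = Model(
    profiles={"hpsdr": {"capability": "full"}, "softrock": {"capability": "no_vfo"}},
    capabilities={"full": {"controls": {"vfo", "mode", "filter"}},
                  "no_vfo": {"controls": {"mode", "filter"}}},
)
model.select("softrock")
model.state["modea"] = "LSB"
print(model.control_enabled("vfo"))               # False
model.select("hpsdr")
print(model.control_enabled("vfo"), model.state)  # True {}
```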

We are now ready to look at how views are updated.

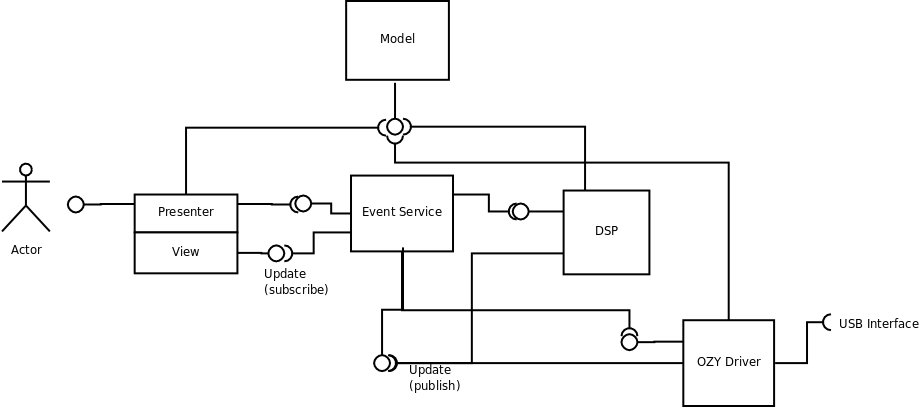

updating the GUI

This section will complete the circle of events and hopefully complete the picture at an overview level. There is, of course, much detail I have missed out, and there are a lot more nodes in the full system, but that will be part of the design detail pages.

The picture is starting to look a bit more complex, but just keep in mind that everything works the same way. No matter how many nodes and connections are shown, it's just more of the same.

We got as far as the user selecting a new mode. That generated two actions by the presenter: it sent a change mode message to the event service on the DSP interface, and it updated the model by sending a message directly to it. The event service, on receiving the message, looks to see whether it has any subscribers registered on that interface. It has one, so it forwards the message to the DSP. The DSP changes mode and then...

This is where the pattern is somewhat modified from the usual MVP. I'm not totally convinced this is correct yet; it works, although I might modify it at some point. I'm treating all the other nodes out there as a surrogate model for the UI. The model itself does not really know what it's doing with respect to individual attributes: a change to the mode of vfo-a for profile xyz just comes across as a string 'modea' to look up in the dictionary. It would need more context to know what to do with that in order to update the GUI (or anyone else interested in updates). The DSP, on the other hand, knows exactly that it has been told to change its mode, so it can respond by publishing the new mode to the update interface. Therefore the receiver of a message sends the update. This could be the DSP for a mode change or, say, for a frequency increment message in an HPSDR system, the OZY node, which would respond by publishing an update with the new frequency.
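A sketch of the "receiver sends the update" rule, with hypothetical names throughout. The DSP handles `change_mode` on its own interface and then publishes the resulting mode on an `update` interface, and a view simply displays whatever the update says. The publish callable stands in for the event service.

```python
from typing import Callable

Publish = Callable[[str, str, dict], None]  # publish(interface, message, payload)


class DspNode:
    """Hypothetical DSP handler: it is told to change mode on its own
    interface and, having done so, reports the new mode on the update
    interface. The receiver of a command is the one that sends the update."""

    def __init__(self, publish: Publish) -> None:
        self._publish = publish
        self.mode = "LSB"

    def on_message(self, message: str, payload: dict) -> None:
        if message == "change_mode":
            self.mode = payload["mode"]  # a real DSP would reconfigure here
            self._publish("update", "mode", {"mode": self.mode})


class ModeView:
    """Hypothetical view: it shows whatever the update interface reports."""

    def __init__(self) -> None:
        self.shown = None

    def on_update(self, message: str, payload: dict) -> None:
        if message == "mode":
            self.shown = payload["mode"]


view = ModeView()
dsp = DspNode(lambda iface, msg, payload: view.on_update(msg, payload))
dsp.on_message("change_mode", {"mode": "USB"})  # as forwarded by the event service
print(view.shown)  # USB
```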

A frequency increment

Think about that frequency update for a moment. The message from the UI was to increment the frequency by, say, 100Hz. Remember the presenter just interprets the user interaction and turns it into a message, so scrolling the 100Hz digit up by 1 sends a frequency increment of 100Hz. The OZY node receives this, adds it to the known current frequency and then checks the result against the capabilities (yes, other nodes look at capabilities too). If the frequency is within the limits of the device, it updates the frequency of Mercury through the OZY interface and then sends an update message, not of the increment, but of the new frequency that has been set. The UI view is not interested in increments; it just wants to know what frequency to set its digits to. If the frequency falls outside the capabilities, the OZY node simply ignores it and the UI view therefore stays as it was. Remember I said that sometimes the view updates the model? This is one case where the model update is the view's responsibility. We don't want to update the model if the frequency was outside the capabilities and, as a result, no change was made. The OZY node cannot update the model, because it does not have the context to know what it's doing: the frequency might belong to vfo-a or vfo-b. The OZY node has no idea about VFOs; only the UI knows about VFOs, so only it can update the model correctly.
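The frequency walkthrough can be sketched as follows. The limits, the `freq_vfo_a` model key and both class names are hypothetical. The logic mirrors the text: apply the increment, check it against the capability, publish the absolute frequency only if it was accepted, and let the view (which knows the VFO) write it to the model.

```python
from typing import Callable

Publish = Callable[[str, str, dict], None]  # publish(interface, message, payload)


class OzyNode:
    """Hypothetical hardware node: applies relative frequency changes
    within the capability limits and reports the absolute result."""

    def __init__(self, publish: Publish, min_hz: int, max_hz: int, freq_hz: int) -> None:
        self._publish = publish
        self.min_hz, self.max_hz = min_hz, max_hz  # taken from the capability
        self.freq_hz = freq_hz

    def on_increment(self, delta_hz: int) -> None:
        new_hz = self.freq_hz + delta_hz
        if not (self.min_hz <= new_hz <= self.max_hz):
            return  # outside the capability: ignore, so no update is sent
        self.freq_hz = new_hz  # a real node would tune Mercury here
        self._publish("update", "frequency", {"hz": new_hz})


class FrequencyView:
    """Hypothetical UI view. Only the UI knows which VFO is active, so the
    view writes the confirmed frequency back to the model."""

    def __init__(self, model: dict, vfo: str) -> None:
        self.model, self.vfo = model, vfo
        self.digits_hz = model[f"freq_{vfo}"]

    def on_update(self, message: str, payload: dict) -> None:
        if message == "frequency":
            self.digits_hz = payload["hz"]
            self.model[f"freq_{self.vfo}"] = payload["hz"]


model = {"freq_vfo_a": 7_100_000}
view = FrequencyView(model, "vfo_a")
ozy = OzyNode(lambda iface, msg, payload: view.on_update(msg, payload),
              min_hz=7_000_000, max_hz=7_200_000, freq_hz=7_100_000)
ozy.on_increment(100)          # scroll the 100Hz digit up by one
print(view.digits_hz, model)   # 7100100 {'freq_vfo_a': 7100100}
ozy.on_increment(200_000)      # would exceed max_hz: ignored, nothing changes
print(view.digits_hz, model)   # 7100100 {'freq_vfo_a': 7100100}
```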

Multiple UIs

The diagram shows only one UI. The current system supports sub-receivers as provided by the magnificent DttSp. Although the sub-receivers can all be run from one UI, it makes better sense to have a UI per receiver. Any UI can send messages and all will receive updates. There are some complications with sub-receivers which mean that not all updates are relevant to all UIs: you don't want RX1 changing its frequency because the RX2 frequency was changed. These issues are handled quite naturally by updates being sent for the receiver that is currently listening. A UI that is set to the listening receiver will action those updates but ignore others that are sub-receiver specific. When a UI is switched to a different sub-receiver, it restores that sub-receiver's state from the model to set its initial state.
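A sketch of per-receiver filtering, assuming sub-receiver specific updates carry a receiver number under a hypothetical `rx` key, and treating updates without that key as relevant to every UI. The class and key names are illustrative only.

```python
class SubRxView:
    """Hypothetical UI bound to one sub-receiver."""

    def __init__(self, rx: int, model: dict) -> None:
        self.model = model
        self.switch_to(rx)

    def switch_to(self, rx: int) -> None:
        # Restore the sub-receiver's state from the model as the initial state.
        self.rx = rx
        self.state = dict(self.model.get(rx, {}))

    def on_update(self, payload: dict) -> None:
        rx = payload.get("rx")
        if rx is not None and rx != self.rx:
            return  # specific to another sub-receiver: ignore
        self.state.update({k: v for k, v in payload.items() if k != "rx"})


model = {1: {"freq_hz": 7_100_000}, 2: {"freq_hz": 14_200_000}}
ui1, ui2 = SubRxView(1, model), SubRxView(2, model)
for ui in (ui1, ui2):  # every UI receives every update
    ui.on_update({"rx": 2, "freq_hz": 14_210_000})
print(ui1.state["freq_hz"], ui2.state["freq_hz"])  # 7100000 14210000
```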

what's next

The overview will be extended to cover the data paths, as the above only covers the control paths. I am also considering a DIY section on building a new node, which is actually not that hard, as most of the connection code is boilerplate. The system is Python except for DttSp and the OZY interface drivers, so it's incredibly easy to work with. You don't even need an IDE; a text editor will do.